For better or worse, artificial intelligence (AI) is being rapidly deployed across the business and HR world, and beyond.

But in HR and payroll compliance – where clarity of interpretation and audit defensibility are paramount – the question isn’t whether AI is impressive.

It’s whether AI is appropriate for these critical functions.

As organisations confront what was, until recently, a cottage industry and is now being styled as employment’s final frontier, we must also ask ourselves:

Does this meaningfully enhance workforce management, or will it exacerbate existing hairline fractures?

The state of affairs

According to McKinsey’s The State of AI report, adoption is on the rise.

Of the global organisations surveyed, 88% routinely use artificial intelligence in at least one business function, up from 78% just a year prior.

On the deployment spectrum, 39% are currently experimenting with AI agents, whereas 23% are “scaling an agentic AI system somewhere in their enterprises.”

Broad as the movement may appear, at this stage, it tells us nothing about efficacy.

For AI agents to make a meaningful impact on workforce management, they will have to address the following tensions endemic to HR and payroll compliance:

- Regulatory change across multiple jurisdictions

- Heavy reliance on manual interpretation of legislation

- Fragmented data systems that fail to link documentation with decision-making

- Compliance teams balancing assurance with operational firefighting

- Rising reputational exposure linked to payroll & workforce practices

“It’s a tool, not the outcome. We’ve positioned AI as being the outcome far too much over the last few years,” offers Sean Donnelly, Chief Technology Officer of Xemplo.

“The market likes to hear us talk about AI. But to use a term as generic as ‘AI’ is like saying we use data storage. We don’t go around shouting that we run on servers.”

Tim Stapleton, Xemplo Head of Product, echoes similar sentiments: “There aren’t many AI-powered levers in our space that pulling would fundamentally change the outcome.”

“People get addicted to AI because it scratches that itch of arduous tasks suddenly being made easy. Which is fine for a lot of things – this is where it can fall apart.”

– Tim Stapleton, Head of Product (Xemplo)

What AI brings to the table

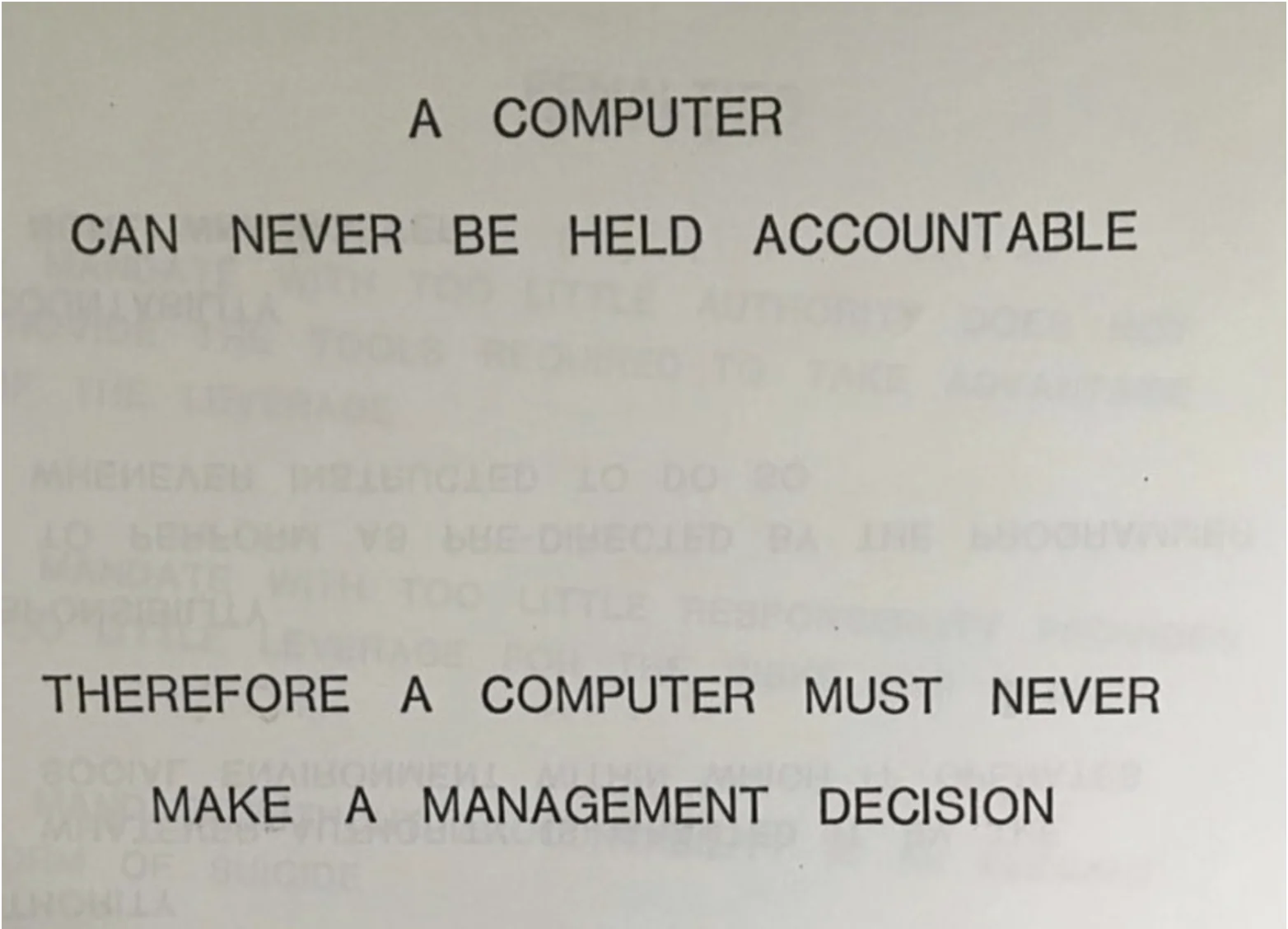

However much work is delegated to it, artificial intelligence does not and will not remove accountability. As a 1979 IBM training manual put it, “A computer can never be held accountable, therefore a computer must never make a management decision.”

“The person who pushes the buttons is responsible. You cannot say it was ChatGPT’s problem; it’s not their problem,” affirms Stapleton on AI’s lack of legal personhood.

What it is positioned to change, however, are the mechanics of control.

The inordinate time spent interpreting legislation at scale, analysing the minutiae of policy updates and relevant clauses, can be cut dramatically with natural language processing.

“There’s definitely value in evaluating contracts for conditions and clauses. Checking positions and comparing them to industry awards,” observes Donnelly.

Machine learning can be deployed to monitor payroll transactions around the clock, flagging deviations from expected patterns that might otherwise go unnoticed.

By the same token, predictive risk modelling can identify indicators of compliance breaches before they escalate.

AI-enabled workforce analytics, meanwhile, can streamline performance management and slow turnover, limiting the erosion of institutional continuity (every departure means lost knowledge, weakened consistency, and heightened exposure).
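To make “flagging deviations from expected patterns” concrete, the Python sketch below compares each employee’s pay in a new run against their own pay history and flags large departures. It is a minimal sketch under stated assumptions: it uses a simple per-employee z-score as a stand-in for a trained machine learning model, and the function name `flag_anomalies`, the default threshold of three standard deviations, and the sample employee IDs are all hypothetical, not any vendor’s method.

```python
from statistics import mean, stdev


def flag_anomalies(history, new_amounts, threshold=3.0):
    """Flag pay amounts that deviate strongly from an employee's own history.

    Hypothetical helper. history maps employee ID -> list of past gross pay
    amounts; new_amounts maps employee ID -> this pay run's gross amount.
    Returns (employee_id, amount, z_score) tuples; z_score is None when there
    is too little history to judge, and inf when a perfectly stable pay changes.
    """
    flagged = []
    for emp_id, amount in new_amounts.items():
        past = history.get(emp_id, [])
        if len(past) < 2:
            # Not enough history to define "expected": route to a human.
            flagged.append((emp_id, amount, None))
            continue
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            # Identical past pays: any change at all is a deviation.
            if amount != mu:
                flagged.append((emp_id, amount, float("inf")))
            continue
        z = (amount - mu) / sigma
        if abs(z) > threshold:
            flagged.append((emp_id, amount, round(z, 2)))
    return flagged


if __name__ == "__main__":
    # Illustrative data only.
    history = {"E001": [2500, 2510, 2490, 2505], "E002": [3100, 3100, 3100], "E003": [1800]}
    run = {"E001": 4100, "E002": 3100, "E003": 1850}
    print([r[0] for r in flag_anomalies(history, run)])  # prints ['E001', 'E003']
```

Anything flagged is a prompt for human review, not an automatic correction. Employees with too little history are deliberately sent to a person rather than silently passed.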

“Using AI to make sense of data and reduce busywork means we can focus on more strategic tasks than fiddling with pivot tables and VLOOKUPs,” says Stapleton.

“The core process, though, needs to remain the core process to ensure data quality and compliance. You still need to verify the assumptions.”

– Tim Stapleton, Head of Product (Xemplo)

Rewriting reactivity

At the strategic core of this revolution, artificial intelligence could provide a direct pathway to proactive HR and payroll compliance, replacing the reactive, retrospective endeavour we’ve largely come to know.

Granted, platforms such as Xemplo have already been leading the way in automation and business analytics, and in giving due consideration to Box 1, the upstream component that determines payroll health by covering correct worker classification, contract setup, and award mapping before a single pay run is processed.

But a forward-looking approach, potentially enabled by AI, would ensure that:

- Risk indicators are constantly monitored

- Controls are stress-tested through scenario modelling

- Resources are allocated based on predicted exposure

Such a paradigm shift would allow compliance leaders to stop firefighting and focus on top-line risk interpretation and governance design.

“I reckon most of an HR manager’s time is just chasing people for forms, ticking boxes, and completing paperwork,” remarks Sean Donnelly.

AI’s most immediate contribution may lie in improving workforce visibility, and with it how businesses understand their own capabilities. Think skills data, role definitions, and compliance requirements linked to one another instead of stored as static records.

Organisations that practise dynamic, AI-facilitated oversight could:

- Constantly update profiles based on performance data & market developments

- Zero in on skill gaps before they crystallise into operational or compliance risk

- Model workforce scenarios for regulatory change, automation exposure, or jurisdictional expansion

- Target upskilling towards areas of predicted exposure more deliberately

Faustian bargains for efficiency

Artificial intelligence is not a silver-bullet solution. In payroll and employment law, where outcomes must be precise and explicable, ambiguity leaves you in jeopardy.

Algorithmic bias derived from historical workforce data, for one, may embed inequities – particularly in pay, progression, or classification.

“The unique part about payroll outcomes is that it’s very deterministic,” explains Sean Donnelly.

“People always talk to us about using AI to calculate payroll and awards. But I can have ten people in a room right now to talk about the award structure in Australia – just one award – and they’ll spend hours arguing over interpretations.”

“You’re leaning on AI to create its own interpretation of a condition. How do you create rules for something that’s neither defined nor clear?”

– Sean Donnelly, Chief Technology Officer (Xemplo)

The cost of a miscalculation is therefore not theoretical; it’s measurable, reportable, and in some cases prosecutable.

“There will always be a need for systems of record, the source of truth – like a master ledger in a bank. Data integrity needs to remain locked down,” states Tim Stapleton.

AI applied at the payroll stage cannot correct flawed onboarding data, misclassified roles, or poorly crafted contracts. Compliance threats are often created upstream.

Machine logic, which in its present form is predominantly mimetic, may help surface anomalies or highlight risk indicators. But until it can provide transparent reasoning for an audit, accountability remains squarely human.

Donnelly adds: “Payroll’s very much around how we trace the auditability of a condition or outcome, and AI’s a bit of a black box… Try explaining that to Fair Work.”

“A major problem with AI at this stage is that it doesn’t know when it’s necessarily wrong, either. In a lot of cases, it doesn’t actually know. It’s a matching algorithm just looking for the closest match.”

At an absolute minimum, before implementing AI, organisations should:

- Audit workforce data structure

- Ensure payroll logic is deterministic and traceable

- Define clear human accountability for AI-assisted decisions
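What “deterministic and traceable” payroll logic can look like is sketched in Python below. The same inputs always produce the same gross pay, and every amount is recorded against the rule that produced it, so the calculation can be replayed line by line for an auditor. This is a hypothetical illustration only. The `Rule` and `calculate_pay` names, the 38-hour threshold, and the 1.5x multiplier are placeholder assumptions, not an interpretation of any actual award.

```python
from dataclasses import dataclass, field
from decimal import Decimal, ROUND_HALF_UP


@dataclass(frozen=True)
class Rule:
    rule_id: str              # hypothetical ID linking back to a documented clause
    description: str
    threshold_hours: Decimal  # hours after which the multiplier applies
    multiplier: Decimal


@dataclass
class PayResult:
    gross: Decimal
    audit_trail: list = field(default_factory=list)


def calculate_pay(hours: Decimal, base_rate: Decimal, overtime_rule: Rule) -> PayResult:
    """Deterministic: identical inputs always yield identical gross and trail."""
    ordinary = min(hours, overtime_rule.threshold_hours)
    overtime = max(hours - overtime_rule.threshold_hours, Decimal("0"))
    result = PayResult(gross=Decimal("0"))

    # Every component of pay is logged with the arithmetic that produced it.
    ordinary_pay = ordinary * base_rate
    result.audit_trail.append(f"ordinary: {ordinary}h x {base_rate} = {ordinary_pay}")
    result.gross += ordinary_pay

    if overtime > 0:
        ot_pay = overtime * base_rate * overtime_rule.multiplier
        result.audit_trail.append(
            f"{overtime_rule.rule_id} ({overtime_rule.description}): "
            f"{overtime}h x {base_rate} x {overtime_rule.multiplier} = {ot_pay}"
        )
        result.gross += ot_pay

    # Decimal avoids floating-point drift; round to cents explicitly.
    result.gross = result.gross.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return result


if __name__ == "__main__":
    rule = Rule("OT-1", "hours beyond threshold (hypothetical)", Decimal("38"), Decimal("1.5"))
    pay = calculate_pay(Decimal("40"), Decimal("30.00"), rule)
    print(pay.gross)  # prints 1230.00
    for line in pay.audit_trail:
        print(line)
```

The design choice is the point: each figure can be traced to a named rule, which is exactly what a pattern-matching model cannot currently offer.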

“Compliance leaders still need to serve as gatekeepers and vet AI outputs,” asserts Tim Stapleton.

“If you replace knowledgeable people with someone who only knows how to engineer AI prompts, you lose governance and critical gatekeeping.”

– Tim Stapleton, Head of Product (Xemplo)

The limits of automation

Interpretation and audit defensibility aside, human judgment and hard-earned expertise remain essential in complex matters such as employment law.

Stapleton illustrates this point with employment contracts: “People see that as something they can go to ChatGPT for, because it’s one of those things they don’t historically know extensively. AI is filling a void, a lack of knowledge.”

“But it’s been cobbled together based on variables that may not even be aligned to the legislative framework, because it gathers information from anywhere and everywhere.”

The risk, in other words, isn’t just that AI “gets it wrong”; it’s that it does so confidently, in a domain where errors carry real legal weight.

Purpose-built compliance platforms address this by anchoring their logic in the legislative framework from the outset, rather than drawing on whatever training data happens to be available.

“The real risk posed is to more junior roles,” notes Sean Donnelly.

“Developers using AI might generate bigger outputs without knowing what ‘good’ looks like – they haven’t got the runs on the board to judge quality. It’s a dangerous place to operate. People can be killed by convenience, relying on tools without developing skills.”

Donnelly continues: “I was looking at another platform the other week, and they were saying, ‘Oh, the AI agent can remind you of things that haven’t been done or send reminders of these activities.’ The software already does it, Xemplo already does that.”

“Is it really better or are we reinventing the wheel?”

– Sean Donnelly, Chief Technology Officer (Xemplo)

We should not conflate newness with efficiency and transformation. Speed without structure, after all, only introduces fault lines.

How secure is secure?

Another dimension to keep in mind is dual oversight: compliance leaders must assume they will also be required to shoulder the growing burden of AI governance.

Centralising vast volumes of sensitive HR data already increases vulnerabilities, which is why enterprise-grade security, such as certification to ISO/IEC 27001:2022, matters. Beyond that, are we truly prepared to become custodians of this rapidly evolving technology, as well as the governance frameworks that come with it?

“The foundation must start with security. Decide what you’ll allow AI to do and put fences around it before even thinking about implementation,” cautions Tim Stapleton.

“Access control is also critical, even within acceptable datasets. You don’t want someone who didn’t have access to a resource suddenly gaining access because AI surfaces it in response to a question. That’s very difficult to rein in once it happens.”
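One way to build the fence Stapleton describes is to filter by the user’s existing permissions before any document reaches the AI model, rather than trying to scrub the model’s answer afterwards. The Python sketch below assumes a hypothetical `Document` type tagged with the roles allowed to read it, and uses naive keyword matching as a placeholder for a real retrieval step. Neither reflects a specific product’s design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles that may already open this document directly


def retrieve_context(query: str, user_roles: set, documents: list) -> list:
    """Return documents that match the query AND that the user may already read.

    Hypothetical helper. Access control is applied first and independently of
    the query, so the model can never be handed a document the user could not
    have opened themselves.
    """
    # Step 1: enforce permissions before any matching happens.
    permitted = [d for d in documents if d.allowed_roles & user_roles]
    # Step 2: naive keyword match (placeholder for a real search step).
    terms = {t.lower() for t in query.split()}
    return [d for d in permitted if terms & set(d.text.lower().split())]


if __name__ == "__main__":
    docs = [
        Document("D1", "Payroll policy for overtime approvals", frozenset({"payroll", "hr"})),
        Document("D2", "Executive salary review and overtime exceptions", frozenset({"exec"})),
    ]
    print([d.doc_id for d in retrieve_context("overtime policy", {"payroll"}, docs)])  # prints ['D1']
```

Because the permission check does not depend on the question asked, a cleverly worded prompt cannot widen what is retrieved.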

Data security and its associated regulations, not quality concerns, appear to be the chief pressure slowing even wider adoption of artificial intelligence.

According to The Economic Times, approximately 79% of APAC organisations surveyed say as much, while 51% are directing payroll teams to focus on “strengthening security practices.”

The same report also found that 71% have faced payroll penalties “once or twice annually,” 80% struggle to keep pace with local regulations across territories, and, as a result, 78% are considering outsourcing payroll to “better support multi-country workforces.”

Donnelly elaborates: “We already blame systems when payroll is miscalculated. It’s not wise for any AI company to take on that level of accountability.”

“We should be helping people make better decisions, not replacing the decision-making entirely.”

– Sean Donnelly, Chief Technology Officer (Xemplo)

If AI is applied to fragmented workforce planning, disconnected skill taxonomies, and inconsistent performance management, its outputs will only reflect those weaknesses, and may even widen what began as hairline fractures.

AI should therefore serve as both an operational tool and a structural diagnostic, revealing whether governance architecture is cohesive, integrated, and resilient, or procedural, siloed, and strained.

Foundation before forward progress

The adoption statistics suggest that, much as for the wider workforce, artificial intelligence represents a crucial inflection point for HR and, by extension, payroll compliance.

Organisations that integrate this vaunted innovation appropriately will be best positioned to anticipate compliance issues rather than merely respond to them after the fact. But make no mistake: AI will not magically correct fragmented workforce data, unclear award interpretations, or inconsistent controls.

It will only magnify them, potentially to an untenable degree.

For HR and payroll leaders, despite the brave new world we’re being sold, the priority remains unchanged: build a structured governance architecture first, then apply AI where it can consolidate control, increase visibility, and provide a tangible underpinning for defensible decision-making.

That distinction between technology that enhances compliance discipline and technology intended to replace it outright will ultimately dictate who strengthens their position and who simply automates their risk.
